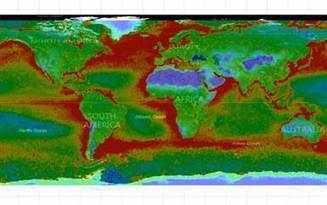

Recent years have witnessed an explosion of extensive geolocated datasets related to human movement, enabling scientists to quantitatively study individual and collective mobility patterns, and to generate models that can capture and reproduce the spatiotemporal structures and regularities in human trajectories. The study of human mobility is especially important for applications such as estimating migratory flows, traffic forecasting, urban planning, and epidemic modeling. In this survey, we review the approaches developed to reproduce various mobility patterns, with the main focus on recent developments. This review can be used both as an introduction to the fundamental modeling principles of human mobility, and as a collection of technical methods applicable to specific mobility-related problems. The review organizes the subject by differentiating between individual and population mobility and also between short-range and long-range mobility. Throughout the text the description of the theory is intertwined with real-world applications.

Human Mobility: Models and Applications

Hugo Barbosa-Filho, Marc Barthelemy, Gourab Ghoshal, Charlotte R. James, Maxime Lenormand, Thomas Louail, Ronaldo Menezes, José J. Ramasco, Filippo Simini, Marcello Tomasini

Via Complexity Digest

This web collection showcases the potential of interdisciplinary complexity research by bringing together a selection of recent Nature Communications articles investigating complex systems. Complexity research aims to characterize and understand the behaviour and nature of systems made up of many interacting elements. Such efforts often require interdisciplinary collaboration and expertise from diverse schools of thought. Nature Communications publishes papers across a broad range of topics that span the physical and life sciences, making the journal an ideal home for interdisciplinary studies.

Via Complexity Digest

A potent theory has emerged explaining a mysterious statistical law that arises throughout physics and mathematics.

A couple of months back, Mark Buchanan wrote an article in which he argued that ABMs might be a productive way of trying to understand the economy. In fact, he went a bit further – he said that ABMs...

A new theory can explain the formation of swarming patterns observed in ensembles of self-propelled polar particles.

Via Eugene Ch'ng

Melvyn Bragg and his guests discuss complexity and how it can help us understand the world around us. When living beings come together and act in a group, they do so in complicated and unpredictable ways: societies often behave very differently from the individuals within them. Complexity was a phenomenon little understood a generation ago, but research into complex systems now has important applications in many different fields, from biology to political science. Today it is being used to explain how birds flock, to predict traffic flow in cities and to study the spread of diseases.

Via NESS, Eugene Ch'ng

A few years ago, I started looking online to fill in chapters of my family history that no one had ever spoken of.

This was just a brilliant paper, talking about exactly what I found wrong with (yet) current computational models: http://t.co/pxP6MZMa7T

My preferred job title is 'theorist', but that is often too ambiguous in casual and non-academic conversation, so I often settle for 'computer scientist'. Unfortunately, it seems that the overwhelming majority of people equate ...

The severity of climate-related disasters is often measured by the number of lives lost, the financial losses for the economy or the costs of recovery. But how do disasters affect the poor? A new report from the Overseas Development Institute explores the relationship between disasters and poverty. The report highlights the potential for natural hazards to spiral into human catastrophes if they entrench poverty that already exists, by pulling vulnerable people down into the poverty trap as their assets and livelihoods vanish.

Via Eugene Ch'ng

For every increase of 5 micrograms per cubic metre in exposure during pregnancy, risk of low birthweight rises by 18%. Babies born to mothers who live in areas with air pollution and dense traffic are more likely to have a low birthweight and...

Microsoft Research and UN scientists are embarking on a highly ambitious project: A computational model of an entire ecosystem, from the soil to the creatures that live on it and interact with it...

Biological organisms must perform computation as they grow, reproduce, and evolve. Moreover, ever since Landauer's bound was proposed it has been known that all computation has some thermodynamic cost -- and that the same computation can be achieved with greater or smaller thermodynamic cost depending on how it is implemented. Accordingly, an important issue concerning the evolution of life is assessing the thermodynamic efficiency of the computations performed by organisms. This issue is interesting both from the perspective of how close life has come to maximally efficient computation (presumably under the pressure of natural selection), and from the practical perspective of what efficiencies we might hope that engineered biological computers might achieve, especially in comparison with current computational systems. Here we show that the computational efficiency of translation, defined as free energy expended per amino acid operation, outperforms the best supercomputers by several orders of magnitude, and is only about an order of magnitude worse than the Landauer bound. However, this efficiency depends strongly on the size and architecture of the cell in question. In particular, we show that the *useful* efficiency of an amino acid operation, defined as the bulk energy per amino acid polymerization, decreases for increasing bacterial size and converges to the polymerization cost of the ribosome. This cost of the largest bacteria does not change in cells as we progress through the major evolutionary shifts to both single and multicellular eukaryotes. However, the rates of total computation per unit mass are nonmonotonic in bacteria with increasing cell size, and also change across different biological architectures including the shift from unicellular to multicellular eukaryotes.

The thermodynamic efficiency of computations made in cells across the range of life

Christopher P. Kempes, David Wolpert, Zachary Cohen, Juan Pérez-Mercader

Via Complexity Digest
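For readers who want a feel for the scale of the Landauer bound mentioned in the abstract above, here is a minimal sketch. It assumes the standard form of the bound, k_B T ln 2 joules per erased bit, and the function names (`landauer_bound_joules`, `multiples_of_landauer`) are illustrative only. It does not reproduce any figure from the paper.

```python
import math

# Exact SI value of the Boltzmann constant, in joules per kelvin.
BOLTZMANN_J_PER_K = 1.380649e-23


def landauer_bound_joules(temperature_kelvin: float) -> float:
    """Minimum energy to erase one bit at the given temperature: k_B * T * ln 2."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


def multiples_of_landauer(energy_per_operation_joules: float,
                          temperature_kelvin: float) -> float:
    """How many times the Landauer bound a given per-operation energy is."""
    return energy_per_operation_joules / landauer_bound_joules(temperature_kelvin)


if __name__ == "__main__":
    # Room temperature (300 K) is an assumption chosen for illustration.
    print(f"{landauer_bound_joules(300.0):.3e} J per bit")
```

A per-operation energy that is "about an order of magnitude worse" than the bound would give a value near 10 from `multiples_of_landauer`.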

Complex systems may have billions of components, making consensus formation slow and difficult. Recently several overlapping stories emerged from various disciplines, including protein structures, neuroscience and social networks, showing that fast responses to known stimuli involve a network core of few, strongly connected nodes. In unexpected situations the core may fail to provide a coherent response, thus the stimulus propagates to the periphery of the network. Here the final response is determined by a large number of weakly connected nodes mobilizing the collective memory and opinion, i.e. the slow democracy exercising the 'wisdom of crowds'. This mechanism resembles Kahneman's "Thinking, Fast and Slow", which distinguishes fast, pattern-based decision making from slow, contemplative decision making. The generality of the response also shows that democracy is neither only a moral stance nor only a decision making technique, but a very efficient general learning strategy developed by complex systems during evolution. The duality of fast core and slow majority may increase our understanding of metabolic, signaling, ecosystem, swarming or market processes, and may help to construct novel methods to explore unusual network responses, deep-learning neural network structures and core-periphery targeting drug design strategies.

Fast and slow thinking -- of networks: The complementary 'elite' and 'wisdom of crowds' of amino acid, neuronal and social networks

Peter Csermely http://arxiv.org/abs/1511.01238

Via Complexity Digest

To determine geographic range for Ebola virus, we tested 276 bats in Bangladesh. Five (3.5%) bats were positive for antibodies against Ebola Zaire and Reston viruses; no virus was detected by PCR. These bats might be a reservoir for Ebola or Ebola-like viruses, and extend the range of filoviruses to mainland Asia.

ALIFE 14, the Fourteenth International Conference on the Synthesis and Simulation of Living Systems, presents the current state of the art of Artificial Life—the highly interdisciplinary research area on artificially constructed living systems, including mathematical, computational, robotic, and biochemical ones. The understanding and application of such generalized forms of life, or “life as it could be,” have been producing significant contributions to various fields of science and engineering.

This volume contains papers that were accepted through rigorous peer reviews for presentations at the ALIFE 14 conference. The topics covered in this volume include: Evolutionary Dynamics; Artificial Evolutionary Ecosystems; Robot and Agent Behavior; Soft Robotics and Morphologies; Collective Robotics; Collective Behaviors; Social Dynamics and Evolution; Boolean Networks, Neural Networks and Machine Learning; Artificial Chemistries, Cellular Automata and Self-Organizing Systems; In-Vitro and In-Vivo Systems; Evolutionary Art, Philosophy and Entertainment; and Methodologies.

Artificial Life 14: Proceedings of the Fourteenth International Conference on the Synthesis and Simulation of Living Systems

Edited by Hiroki Sayama, John Rieffel, Sebastian Risi, René Doursat and Hod Lipson

http://mitpress.mit.edu/books/artificial-life-14

Via Complexity Digest

Our empirical analysis demonstrates that in the chosen network data sets, nodes which had a high Closeness Centrality also had a high Eccentricity Centrality. Likewise, high Degree Centrality correlated closely with high Eigenvector Centrality. Betweenness Centrality, by contrast, varied according to network topology and did not demonstrate any noticeable pattern. In terms of identification of key nodes, we discovered that, compared with other centrality measures, Eigenvector and Eccentricity Centralities were better able to identify important nodes.

Batool K, Niazi MA (2014) Towards a Methodology for Validation of Centrality Measures in Complex Networks. PLoS ONE 9(4): e90283. http://dx.doi.org/10.1371/journal.pone.0090283

Via Complexity Digest
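Two of the measures compared in the abstract above can be computed with a plain breadth-first search. The sketch below is not the authors' code. It assumes an undirected, unweighted, connected graph given as an adjacency dictionary, defines closeness as (n - 1) divided by the sum of shortest-path distances, and reports raw eccentricity (the largest distance to any other node; "eccentricity centrality" is often taken as its reciprocal). All names are illustrative.

```python
from collections import deque


def bfs_distances(adjacency, source):
    """Hop distances from source to every reachable node."""
    distances = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in adjacency[node]:
            if neighbor not in distances:
                distances[neighbor] = distances[node] + 1
                queue.append(neighbor)
    return distances


def closeness_and_eccentricity(adjacency):
    """Map each node to (closeness, eccentricity); assumes a connected graph."""
    result = {}
    for node in adjacency:
        distances = bfs_distances(adjacency, node)
        others = [d for n, d in distances.items() if n != node]
        closeness = len(others) / sum(others) if others else 0.0
        eccentricity = max(others) if others else 0
        result[node] = (closeness, eccentricity)
    return result


if __name__ == "__main__":
    # A five-node path graph a-b-c-d-e, used purely as a toy example.
    path = {"a": ["b"], "b": ["a", "c"], "c": ["b", "d"],
            "d": ["c", "e"], "e": ["d"]}
    for node, (closeness, eccentricity) in closeness_and_eccentricity(path).items():
        print(node, round(closeness, 3), eccentricity)
```

On the toy path graph the middle node has both the highest closeness and the smallest eccentricity, which is the kind of agreement between the two measures the abstract reports.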

The NHS could be run more effectively if senior decision makers used simulation software to test the outcome of different approaches before rolling them out, according to a report out today.

Via Eugene Ch'ng

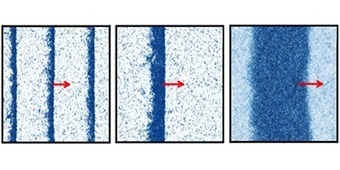

The global spread of epidemics, rumors, opinions, and innovations are complex, network-driven dynamic processes. The combined multiscale nature and intrinsic heterogeneity of the underlying networks make it difficult to develop an intuitive understanding of these processes, to distinguish relevant from peripheral factors, to predict their time course, and to locate their origin. However, we show that complex spatiotemporal patterns can be reduced to surprisingly simple, homogeneous wave propagation patterns, if conventional geographic distance is replaced by a probabilistically motivated effective distance. In the context of global, air-traffic–mediated epidemics, we show that effective distance reliably predicts disease arrival times. Even if epidemiological parameters are unknown, the method can still deliver relative arrival times. The approach can also identify the spatial origin of spreading processes and successfully be applied to data of the worldwide 2009 H1N1 influenza pandemic and 2003 SARS epidemic.

The Hidden Geometry of Complex, Network-Driven Contagion Phenomena

Dirk Brockmann, Dirk Helbing. Science, 13 December 2013: Vol. 342 no. 6164 pp. 1337-1342

http://dx.doi.org/10.1126/science.1245200

Via Complexity Digest
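The idea of an effective distance from the abstract above can be sketched in a few lines. This is a hedged illustration, not the authors' implementation. It assumes the per-link effective length is 1 - ln(P), where P is the fraction of travelers leaving one node who go to the other, and that the effective distance from an origin is the smallest sum of link lengths over all paths (found here with Dijkstra's algorithm). The `flux` dictionary and the node names are made up for the example.

```python
import heapq
import math


def effective_distances(flux, origin):
    """Shortest effective distance from origin to every reachable node.

    flux[n][m] is the (hypothetical) number of travelers going from n to m.
    Each link n -> m gets length 1 - ln(P), with P = flux[n][m] / total outflow of n.
    """
    best = {origin: 0.0}
    heap = [(0.0, origin)]
    while heap:
        distance, node = heapq.heappop(heap)
        if distance > best.get(node, math.inf):
            continue  # stale heap entry
        outgoing = flux.get(node, {})
        total = sum(outgoing.values())
        for target, travelers in outgoing.items():
            if travelers <= 0:
                continue  # no traffic on this link
            link_length = 1.0 - math.log(travelers / total)
            candidate = distance + link_length
            if candidate < best.get(target, math.inf):
                best[target] = candidate
                heapq.heappush(heap, (candidate, target))
    return best


if __name__ == "__main__":
    # Toy three-airport network with invented passenger counts.
    flux = {
        "A": {"B": 90, "C": 10},
        "B": {"A": 50, "C": 50},
        "C": {"A": 10, "B": 10},
    }
    for node, distance in sorted(effective_distances(flux, "A").items()):
        print(node, round(distance, 3))
```

In the toy network the direct link from A to C carries little traffic, so the path through B is effectively shorter even though it has more hops. That is the sense in which effective distance can differ from geographic distance.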

The changes we're seeing just aren't normal.

Social systems are among the most complex known. This poses particular problems for those who wish to understand them. The complexity often makes analytic approaches infeasible and natural language approaches inadequate for relating intricate cause and effect. However, individual- and agent-based computational approaches hold out the possibility of new and deeper understanding of such systems. Simulating Social Complexity examines all aspects of using agent- or individual-based simulation. This approach represents systems as individual elements, each having its own set of differing states and internal processes. The interactions between elements in the simulation represent interactions in the target systems. What makes these elements "social" is that they are usefully interpretable as interacting elements of an observed society. The focus here is on human society, but it can be extended to include social animals or artificial agents where such work enhances our understanding of human society. This handbook is intended to help in the process of maturation of this new field. It brings together, through the collaborative effort of many leading researchers, summaries of the best thinking and practice in this area and constitutes a reference point for standards against which future methodological advances are judged.

Via Complexity Digest

Biotech, farmer associations key for climate adaptation - panel. Reuters AlertNet (blog). LONDON (Thomson Reuters Foundation) - An increasingly extreme climate is presenting new challenges to farmers across the world, and biotechnology and greater...

Cancer costs countries in the European Union 126bn euro (£107bn) a year, according to the first EU-wide analysis of the economic impact of the disease.

Suggested by Jed Fisher

Diesel exhaust is pretty nasty stuff. Pass an overloaded 18-wheeler clouding up the highway, and that acrid plume of hydrocarbons will overpower even your best little tree air freshener.
