Recent years have witnessed an explosion of extensive geolocated datasets related to human movement, enabling scientists to quantitatively study individual and collective mobility patterns, and to generate models that can capture and reproduce the spatiotemporal structures and regularities in human trajectories. The study of human mobility is especially important for applications such as estimating migratory flows, traffic forecasting, urban planning, and epidemic modeling. In this survey, we review the approaches developed to reproduce various mobility patterns, with the main focus on recent developments. This review can be used both as an introduction to the fundamental modeling principles of human mobility, and as a collection of technical methods applicable to specific mobility-related problems. The review organizes the subject by differentiating between individual and population mobility and also between short-range and long-range mobility. Throughout the text the description of the theory is intertwined with real-world applications. Human Mobility: Models and Applications

Hugo Barbosa-Filho, Marc Barthelemy, Gourab Ghoshal, Charlotte R. James, Maxime Lenormand, Thomas Louail, Ronaldo Menezes, José J. Ramasco, Filippo Simini, Marcello Tomasini

Via Complexity Digest

CompleNet is an international conference that brings together researchers and practitioners from diverse disciplines, including sociology, biology, physics, and computer science, who share a passion to better understand the interdependencies within and across systems. CompleNet is a venue to discuss ideas and findings about all types of networks, from biological, to technological, to informational and social. It is this interdisciplinary nature of complex networks that CompleNet aims to explore and celebrate. CompleNet 2018 - 9th Conference on Complex Networks Boston (MA, US) March 5-8, 2018 www.complenet.org

Via Complexity Digest

Biological organisms must perform computation as they grow, reproduce, and evolve. Moreover, ever since Landauer's bound was proposed it has been known that all computation has some thermodynamic cost -- and that the same computation can be achieved with greater or smaller thermodynamic cost depending on how it is implemented. Accordingly an important issue concerning the evolution of life is assessing the thermodynamic efficiency of the computations performed by organisms. This issue is interesting both from the perspective of how close life has come to maximally efficient computation (presumably under the pressure of natural selection), and from the practical perspective of what efficiencies we might hope that engineered biological computers might achieve, especially in comparison with current computational systems. Here we show that the computational efficiency of translation, defined as free energy expended per amino acid operation, outperforms the best supercomputers by several orders of magnitude, and is only about an order of magnitude worse than the Landauer bound. However this efficiency depends strongly on the size and architecture of the cell in question. In particular, we show that the *useful* efficiency of an amino acid operation, defined as the bulk energy per amino acid polymerization, decreases for increasing bacterial size and converges to the polymerization cost of the ribosome. This cost of the largest bacteria does not change in cells as we progress through the major evolutionary shifts to both single and multicellular eukaryotes. However, the rates of total computation per unit mass are nonmonotonic in bacteria with increasing cell size, and also change across different biological architectures including the shift from unicellular to multicellular eukaryotes. The thermodynamic efficiency of computations made in cells across the range of life

Christopher P. Kempes, David Wolpert, Zachary Cohen, Juan Pérez-Mercader

Via Complexity Digest
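For readers who want a feel for the scale of the Landauer bound mentioned in the abstract above, here is a minimal sketch of the standard formula, k_B · T · ln 2 per erased bit. The temperature of 300 K and the helper `ratio_to_landauer` are illustrative assumptions, not values or methods taken from the paper, and an amino acid operation is not necessarily a one-bit operation.

```python
import math

# Boltzmann constant in joules per kelvin (exact value in the 2019 SI).
BOLTZMANN_J_PER_K = 1.380649e-23


def landauer_bound_joules(temperature_kelvin: float) -> float:
    """Minimum energy to erase one bit at this temperature: k_B * T * ln 2."""
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


def ratio_to_landauer(energy_per_op_joules: float, temperature_kelvin: float) -> float:
    """Hypothetical helper: how many times above the one-bit bound an operation sits."""
    return energy_per_op_joules / landauer_bound_joules(temperature_kelvin)


# Assumed temperature of 300 K, chosen only for illustration.
bound_at_300k = landauer_bound_joules(300.0)
print(f"Landauer bound at 300 K: {bound_at_300k:.3e} J per bit")
```

A ratio near 10 from `ratio_to_landauer` would correspond to "about an order of magnitude worse than the Landauer bound" in the sense used by the abstract, under the one-bit simplification stated above.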

In this paper we present a thorough analysis of the nature of news in different mediums across the ages, introducing a unique mathematical model to fit the characteristics of information spread. This model enhances the information diffusion model to account for conflicting information and the topical distribution of news in terms of popularity for a given era. We translate this information to a separate graphical node model to determine the spread of a news item given a certain category and relevance factor. The two models are used as a base for a simulation of information dissemination for varying graph topologies. The simulation is stress-tested and compared against real-world data to prove its relevancy. We are then able to use these simulations to deduce some conclusive statements about the optimization of information spread. Characterizing information importance and the effect on the spread in various graph topologies

James Flamino, Alexander Norman, Madison Wyatt

Via Complexity Digest

Network science offers a powerful language to represent and study complex systems composed of interacting elements from the Internet to social and biological systems. In its standard formulation, this framework relies on the assumption that the underlying topology is static, or changing very slowly as compared to dynamical processes taking place on it, e.g., epidemic spreading or navigation. Fuelled by the increasing availability of longitudinal networked data, recent empirical observations have shown that this assumption is not valid in a variety of situations. Instead, often the network itself presents rich temporal properties and new tools are required to properly describe and analyse their behaviour. A Guide to Temporal Networks presents recent theoretical and modelling progress in the emerging field of temporally varying networks, and provides connections between different areas of knowledge required to address this multi-disciplinary subject. After an introduction to key concepts on networks and stochastic dynamics, the authors guide the reader through a coherent selection of mathematical and computational tools for network dynamics. Perfect for students and professionals, this book is a gateway to an active field of research developing between the disciplines of applied mathematics, physics and computer science, with applications in others including social sciences, neuroscience and biology.

Via Complexity Digest

Computer models can help humans gain insight into the functioning of complex systems. Used for training, they can also help gain insight into the cognitive processes humans use to understand these systems. By influencing humans' understanding (and consequent actions), computer models can thus generate an impact on both these actors and the very systems they are designed to simulate. When these systems also include humans, a number of self-referential relations thus emerge which can lead to very complex dynamics. This is particularly true when we explicitly acknowledge and model the existence of multiple conflicting representations of reality among different individuals. Given the increasing availability of computational devices, the use of computer models to support individual and shared decision making could potentially have implications far wider than the ones often discussed within the Information and Communication Technologies community in terms of computational power and network communication. We discuss some theoretical implications and describe some initial numerical simulations. Models and people: An alternative view of the emergent properties of computational models

Fabio Boschetti Complexity Volume 21, Issue 6

July/August 2016

Pages 202–213 http://dx.doi.org/10.1002/cplx.21680

Via Complexity Digest

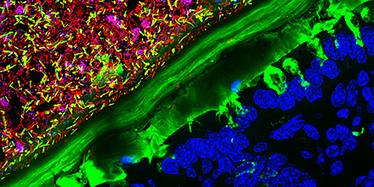

This 63X photograph shows a mouse colon colonized with human microbiota. Taken using confocal microscopy, it won second place in the 2015 Nikon Small World Photomicrography Competition, which recognizes excellence in photography through the optical microscope.

Accessing information efficiently is vital for animals to make the optimal decisions, and it is particularly important when they are facing predators. Yet until now, very few quantitative conclusions have been drawn about the information dynamics in the interaction between animals due to the lack of appropriate theoretical measures. Here, we employ transfer entropy (TE), a new information-theoretic and model-free measure, to explore the information dynamics in the interaction between a predator and a prey fish. We conduct experiments in which a predator and a prey fish are confined in separate parts of an arena, but can communicate with each other visually and tactilely. TE is calculated on the pair’s coarse-grained state of the trajectories. We find that the prey’s TE is generally significantly bigger than the predator’s during trials, which indicates that the dominant information is transmitted from predator to prey. We then demonstrate that the direction of information flow is independent of the parameters used in the coarse-graining procedures. We further calculate the prey’s TE at different distances between it and the predator. The resulting figure shows a high plateau in the mid-range of the distance, with TE dropping quickly at both the near and the far ends. This result suggests that there is a sensitive spatial zone where the prey is highly vigilant of the predator’s position. Information Dynamics in the Interaction between a Prey and a Predator Fish

Feng Hu, Li-Juan Nie and Shi-Jian Fu Entropy 2015, 17(10), 7230-7241; http://dx.doi.org/10.3390/e17107230

Via Complexity Digest, Phillip Trotter
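To make the transfer entropy measure in the abstract above more concrete, here is a minimal sketch of a plug-in estimator for discrete (coarse-grained) state sequences with a history length of one step. The history length, the binary states, and the synthetic `predator` and `prey` sequences are assumptions made for illustration; they are not the paper's data or its exact procedure.

```python
import random
from collections import Counter
from math import log2


def transfer_entropy(source, target):
    """Plug-in estimate of TE from source to target, history length 1, in bits.

    TE = sum over (x_next, x_prev, y_prev) of
         p(x_next, x_prev, y_prev) * log2( p(x_next | x_prev, y_prev) / p(x_next | x_prev) )
    where x is the target sequence and y is the source sequence.
    """
    if len(source) != len(target) or len(target) < 2:
        raise ValueError("sequences must have equal length of at least 2")
    n = len(target) - 1  # number of observed transitions
    triples = Counter(zip(target[1:], target[:-1], source[:-1]))
    prev_pairs = Counter(zip(target[:-1], source[:-1]))
    self_pairs = Counter(zip(target[1:], target[:-1]))
    prev_single = Counter(target[:-1])

    te = 0.0
    for (x_next, x_prev, y_prev), count in triples.items():
        p_joint = count / n
        p_given_both = count / prev_pairs[(x_prev, y_prev)]
        p_given_self = self_pairs[(x_next, x_prev)] / prev_single[x_prev]
        te += p_joint * log2(p_given_both / p_given_self)
    return te


# Synthetic example (an assumption): the prey's state copies the predator's
# state from the previous time step, so information flows predator -> prey.
rng = random.Random(0)
predator = [rng.randint(0, 1) for _ in range(2000)]
prey = [0] + predator[:-1]

te_predator_to_prey = transfer_entropy(predator, prey)
te_prey_to_predator = transfer_entropy(prey, predator)
print(te_predator_to_prey, te_prey_to_predator)
```

In this toy setup the estimate in the predator-to-prey direction should be much larger than in the reverse direction, which mirrors the asymmetry the abstract uses to infer the dominant direction of information flow. Plug-in estimates are biased for short sequences, so real data would likely need longer recordings or a bias correction.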

Nature’s large-scale patterns emerge from incomplete surveys, thanks to ideas borrowed from information theory.

Growing nerve tissue and organs is a sci-fi dream. Moheb Costandi met the pioneering researcher who grew eyes and brain cells.

As humans transform ecosystems and come into closer contact with animals, scientists fear more viral epidemics

Our empirical analysis demonstrates that in the chosen network data sets, nodes which had a high Closeness Centrality also had a high Eccentricity Centrality. Likewise, high Degree Centrality also correlated closely with a high Eigenvector Centrality. Betweenness Centrality, by contrast, varied according to network topology and did not demonstrate any noticeable pattern. In terms of identification of key nodes, we discovered that as compared with other centrality measures, Eigenvector and Eccentricity Centralities were better able to identify important nodes. Batool K, Niazi MA (2014) Towards a Methodology for Validation of Centrality Measures in Complex Networks. PLoS ONE 9(4): e90283. http://dx.doi.org/10.1371/journal.pone.0090283

Via Complexity Digest
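As a rough illustration of three of the centrality measures named in the summary above, the following sketch computes degree, closeness, and eccentricity centrality with breadth-first search. It assumes a connected, undirected, unweighted graph stored as a dictionary of neighbor sets, and it uses common textbook normalizations (eccentricity centrality taken as the reciprocal of eccentricity); the paper's exact definitions and data sets may differ, and the star graph is a made-up example.

```python
from collections import deque


def bfs_distances(adj, start):
    """Shortest-path hop counts from start to every reachable node."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in adj[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def centralities(adj):
    """Degree, closeness, and eccentricity centrality for each node.

    Assumes a connected undirected graph with at least two nodes.
    """
    n = len(adj)
    result = {}
    for node in adj:
        dist = bfs_distances(adj, node)
        others = [d for v, d in dist.items() if v != node]
        result[node] = {
            "degree": len(adj[node]) / (n - 1),   # fraction of possible neighbors
            "closeness": (n - 1) / sum(others),   # inverse mean distance
            "eccentricity": 1 / max(others),      # inverse of the farthest distance
        }
    return result


# Hypothetical star graph: node 0 is the hub, nodes 1..4 are leaves.
star = {0: {1, 2, 3, 4}, 1: {0}, 2: {0}, 3: {0}, 4: {0}}
scores = centralities(star)
for node, values in scores.items():
    print(node, values)
```

On this star graph the hub scores highest on all three measures, a small example of closeness and eccentricity centrality moving together.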

Poor economies not only produce less; they typically produce things that involve fewer inputs and fewer intermediate steps. Yet the supply chains of poor countries face more frequent disruptions---delivery failures, faulty parts, delays, power outages, theft, government failures---that systematically thwart the production process. To understand how these disruptions affect economic development, we model an evolving input--output network in which disruptions spread contagiously among optimizing agents. The key finding is that a poverty trap can emerge: agents adapt to frequent disruptions by producing simpler, less valuable goods, yet disruptions persist. Growing out of poverty requires that agents invest in buffers to disruptions. These buffers rise and then fall as the economy produces more complex goods, a prediction consistent with global patterns of input inventories. Large jumps in economic complexity can backfire. This result suggests why "big push" policies can fail, and it underscores the importance of reliability and of gradual increases in technological complexity. Contagious disruptions and complexity traps in economic development

Charles D. Brummitt, Kenan Huremovic, Paolo Pin, Matthew H. Bonds, Fernando Vega-Redondo

Via Complexity Digest

How cooperation can evolve between players is an unsolved problem of biology. Here we use Hamiltonian dynamics of models of the Ising type to describe populations of cooperating and defecting players to show that the equilibrium fraction of cooperators is given by the expectation value of a thermal observable akin to a magnetization. We apply the formalism to the Public Goods game with three players, and show that a phase transition between cooperation and defection occurs that is equivalent to a transition in one-dimensional Ising crystals with long-range interactions. We also investigate the effect of punishment on cooperation and find that punishment acts like a magnetic field that leads to an "alignment" between players, thus encouraging cooperation. We suggest that a thermal Hamiltonian picture of the evolution of cooperation can generate other insights about the dynamics of evolving groups by mining the rich literature of critical dynamics in low-dimensional spin systems. Thermodynamics of Evolutionary Games

Christoph Adami, Arend Hintze

Via Complexity Digest

We present a model of contagion that unifies and generalizes existing models of the spread of social influences and micro-organismal infections. Our model incorporates individual memory of exposure to a contagious entity (e.g., a rumor or disease), variable magnitudes of exposure (dose sizes), and heterogeneity in the susceptibility of individuals. Through analysis and simulation, we examine in detail the case where individuals may recover from an infection and then immediately become susceptible again (analogous to the so-called SIS model). We identify three basic classes of contagion models which we call *epidemic threshold*, *vanishing critical mass*, and *critical mass* classes, where each class of models corresponds to different strategies for prevention or facilitation. We find that the conditions for a particular contagion model to belong to one of these three classes depend only on memory length and the probabilities of being infected by one and two exposures respectively. These parameters are in principle measurable for real contagious influences or entities, thus yielding empirical implications for our model. We also study the case where individuals attain permanent immunity once recovered, finding that epidemics inevitably die out but may be surprisingly persistent when individuals possess memory. A generalized model of social and biological contagion

Peter Sheridan Dodds, Duncan J. Watts

Via Complexity Digest
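The ingredients listed in the abstract above (memory of past exposures, doses, a susceptibility threshold, and SIS-style recovery) can be sketched as a small simulation. This is a simplified illustration under stated assumptions, not the authors' implementation: the population is randomly mixed, every dose has the same size, every individual has the same threshold, and all parameter names and default values are hypothetical.

```python
import random
from collections import deque


def simulate_contagion(n=500, steps=200, memory=5, p=0.3, dose=1.0,
                       threshold=2.0, recovery=0.5, initial_infected=0.1, seed=0):
    """Return the infected fraction after each time step.

    Each individual remembers the doses received over the last `memory` steps
    and is infected whenever the remembered total reaches `threshold`.
    Infected individuals whose total falls below the threshold recover with
    probability `recovery` per step and become susceptible again (SIS-like).
    """
    rng = random.Random(seed)
    infected = [rng.random() < initial_infected for _ in range(n)]
    # Fixed-length dose memory; the oldest entry is dropped on each append.
    memories = [deque([0.0] * memory, maxlen=memory) for _ in range(n)]
    history = []
    for _ in range(steps):
        infected_fraction = sum(infected) / n
        next_infected = []
        for i in range(n):
            # Random mixing (assumption): the contact is infected with
            # probability infected_fraction, and exposure succeeds with probability p.
            exposed = rng.random() < infected_fraction and rng.random() < p
            memories[i].append(dose if exposed else 0.0)
            if sum(memories[i]) >= threshold:
                next_infected.append(True)
            elif infected[i]:
                next_infected.append(rng.random() >= recovery)
            else:
                next_infected.append(False)
        infected = next_infected
        history.append(sum(infected) / n)
    return history


trajectory = simulate_contagion()
print(f"final infected fraction: {trajectory[-1]:.3f}")
```

Setting `threshold` at or below `dose` makes a single exposure sufficient, which resembles a conventional epidemic model, while a larger threshold requires repeated exposures within the memory window. Heterogeneous doses and thresholds, which the abstract describes, would replace the fixed `dose` and `threshold` values with draws from distributions.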

John H. Holland's general theories of adaptive processes apply across biological, cognitive, social, and computational systems.

Few of us really understand the weird world of quantum physics – but our bodies might take advantage of quantum properties

Complex systems may have billions of components, making consensus formation slow and difficult. Recently several overlapping stories emerged from various disciplines, including protein structures, neuroscience and social networks, showing that fast responses to known stimuli involve a network core of few, strongly connected nodes. In unexpected situations the core may fail to provide a coherent response, thus the stimulus propagates to the periphery of the network. Here the final response is determined by a large number of weakly connected nodes mobilizing the collective memory and opinion, i.e. the slow democracy exercising the 'wisdom of crowds'. This mechanism resembles Kahneman's "Thinking, Fast and Slow", which distinguishes fast, pattern-based decision making from slow, contemplative decision making. The generality of the response also shows that democracy is neither only a moral stance nor only a decision making technique, but a very efficient general learning strategy developed by complex systems during evolution. The duality of fast core and slow majority may increase our understanding of metabolic, signaling, ecosystem, swarming or market processes, and may help to construct novel methods to explore unusual network responses, deep-learning neural network structures and core-periphery targeting drug design strategies. Fast and slow thinking -- of networks: The complementary 'elite' and 'wisdom of crowds' of amino acid, neuronal and social networks

Peter Csermely http://arxiv.org/abs/1511.01238

Via Complexity Digest

Here’s how to cause a ruckus: Ask a bunch of naturalists to simplify the world. We usually think in terms of a web of complicated…

A potent theory has emerged explaining a mysterious statistical law that arises throughout physics and mathematics.

Where do a zebra’s stripes, a leopard’s spots and our fingers come from? The key was found years ago – by the man who cracked the Enigma code, writes Kat Arney.

The precise factors that result in an Ebola virus outbreak remain unknown, but a broad examination of the complex and interwoven ecology and socioeconomics may help us better understand what has already happened and be on the lookout for what might happen next, including determining regions and populations at risk. Although the focus is often on the rapidity and efficacy of the short-term international response, attention to these admittedly challenging underlying factors will be required for long-term prevention and control.

To determine geographic range for Ebola virus, we tested 276 bats in Bangladesh. Five (3.5%) bats were positive for antibodies against Ebola Zaire and Reston viruses; no virus was detected by PCR. These bats might be a reservoir for Ebola or Ebola-like viruses, and extend the range of filoviruses to mainland Asia.
